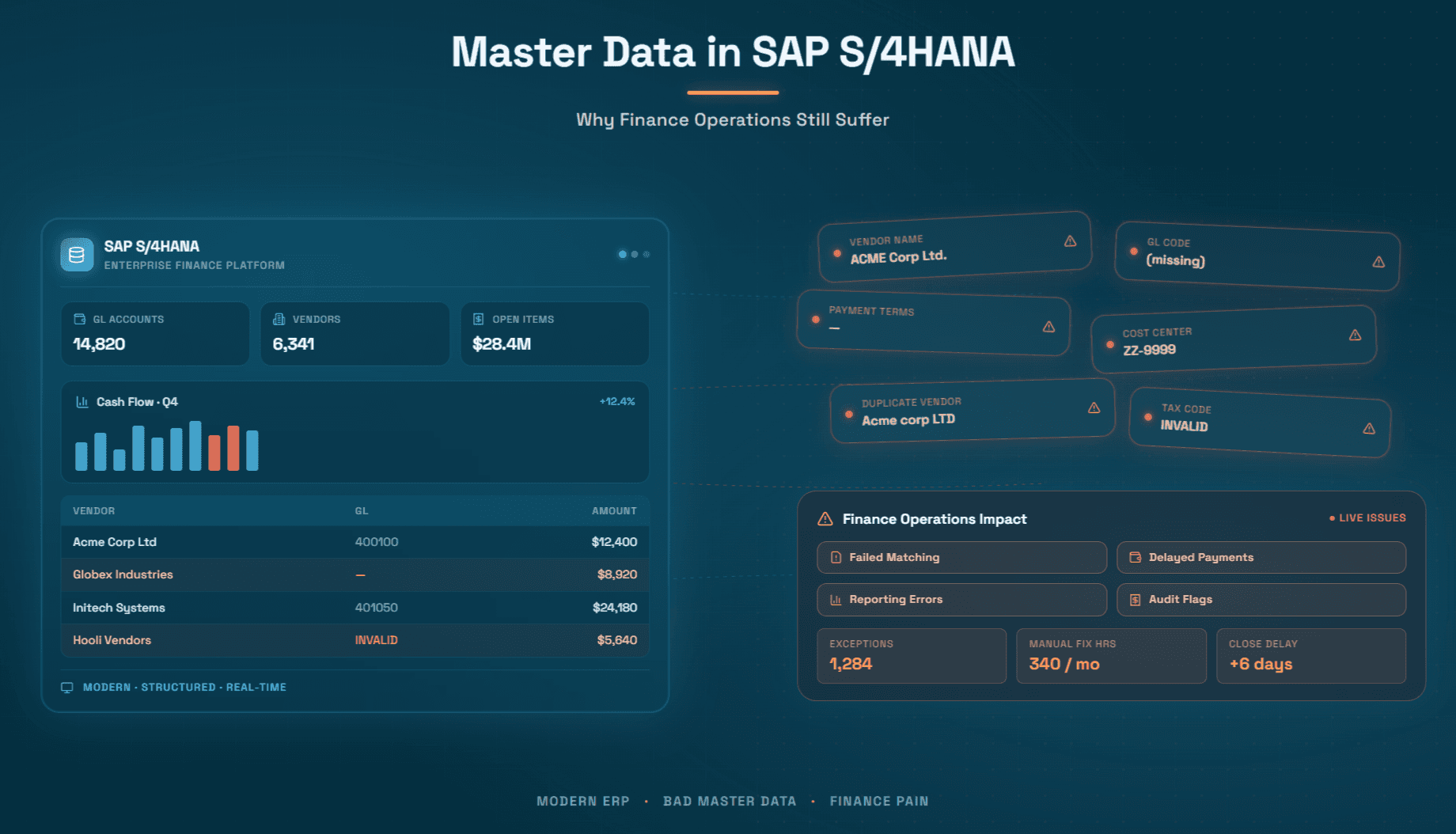

Master Data in SAP S/4HANA: Why Finance Operations Still Suffer

Why vendor, customer, and chart of accounts data remain hidden blockers to finance efficiency

Every year, finance teams in large and mid-market enterprises invest heavily in SAP S/4HANA migrations, expecting cleaner processes, faster closes, and better visibility. And yet, month after month, the same problems recur: invoices fail to match, payments go out incorrectly, period-end closes drag on, and auditors raise flags. The culprit, almost universally, is not the ERP itself. It is the master data living inside it.

Master data, which includes vendor records, customer records, material masters, GL accounts, cost centers, and tax classifications, is the connective tissue of every financial transaction in SAP S/4HANA. When that tissue is frayed, every downstream process suffers. When master data governance is weak or absent, even the most sophisticated ERP becomes a sophisticated source of errors.

This article examines precisely why master data problems persist in SAP S/4HANA environments, what they cost finance operations in practice, and how finance leaders are finally gaining the tools to fix them, not just patch them.

What Is Master Data in SAP S/4HANA, and Why Does It Matter So Much?

Before diagnosing the problem, it is worth establishing what master data actually means inside an SAP S/4HANA context, because the term is often used loosely.

In S/4HANA, master data refers to the core reference records that govern how business transactions are processed and recorded. The major categories are:

Vendor Master (now Supplier in S/4HANA's Business Partner model): Stores all information about a supplier, such as legal name, address, payment terms, bank details, tax classification, purchasing organization data, and company code-specific accounting data. Every purchase order, every invoice, and every payment references this record.

Customer Master (also merged into Business Partner): Contains billing addresses, payment terms, dunning configuration, credit limits, and revenue account assignments. It governs how order-to-cash transactions are executed.

Material Master: Describes the goods and services the company buys or sells, including valuation class, purchasing unit of measure, tax indicator, and GL account determination. Errors here cascade directly into costing and inventory valuation.

GL Account Master / Chart of Accounts: Defines the accounts to which every transaction is posted. Structural inconsistencies here, such as duplicate accounts, missing tax relevance flags, and incorrect field status configurations, create reporting gaps that auditors and controllers struggle to reconcile. For a deeper look at how these structures affect operations, the Chart of Accounts and GL Coding for SAP resource from Hyperbots provides extensive context.

Cost Center and Profit Center Masters: Control how costs and revenues are allocated internally. Misassigned or outdated cost centers result in misleading management reports.

Tax Master Data: Includes tax codes, jurisdiction codes, and condition records that determine how VAT, sales tax, and use tax are calculated on transactions. The relationship between sales and use tax origins and destination locations alone introduces enormous complexity that master data must capture correctly.

The reason master data matters so disproportionately is straightforward: SAP S/4HANA is a transaction-processing engine. It does exactly what the master data tells it to do. If the vendor master says payment terms are Net 60 but the contract says Net 30, SAP will pay in 60 days, and the company will pay late and forfeit any discount opportunity every single time, without any system-generated warning. The ERP is not wrong. The data is wrong. And the finance team pays the price.

The Scale of Master Data Quality Issues in Finance Operations

Data quality issues in enterprise finance are not edge cases. They are pervasive. Gartner has estimated that poor data quality costs organizations an average of $12.9 million annually. For companies running SAP S/4HANA, where the ERP is deeply embedded in every financial workflow, the cost is typically concentrated in several high-friction areas.

Duplicate vendor records are among the most common and costly. When the same supplier exists under multiple vendor IDs (created by different departments, different geographies, or during system migrations), three problems emerge simultaneously: duplicate payment risk rises sharply, spend analytics become unreliable, and vendor negotiation leverage is weakened because total spend is invisible. Understanding how to identify anomalies in payment terms for vendors is inseparable from having clean vendor master data in the first place.

Incorrect or missing bank account data causes payment failures and, in more serious cases, enables fraud. When vendor bank details are updated through email rather than through a controlled, authenticated process, which is still common in many organizations, the risk of business email compromise (BEC) fraud is substantial.

Stale tax classifications generate compliance exposure. If a vendor's tax exemption certificate expires and the master data is not updated, the company may either over-withhold or under-withhold tax, creating reconciliation headaches and potential penalties. The compliance implications of incorrect sales and use tax are significant, especially in multi-state US operations.

Inconsistent GL account structures create reporting fragmentation. In multi-entity SAP environments, the same type of expense may be coded to different GL accounts across company codes, making consolidated reporting time-consuming and prone to manual adjustment. The broader challenge of differences in Chart of Accounts across ERPs is a known pain point for any organization managing a complex or hybrid landscape.
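One way to surface this kind of fragmentation is to group postings by expense type and list every type that lands on more than one GL account across company codes. The following is a minimal sketch, assuming a hypothetical extract of (company code, expense type, GL account) tuples; the field layout and sample values are illustrative and do not correspond to actual SAP table fields.

```python
from collections import defaultdict

def find_inconsistent_gl_coding(postings):
    """Return expense types that are coded to more than one GL account.

    postings: iterable of (company_code, expense_type, gl_account) tuples.
    This extract format is a hypothetical one chosen for illustration.
    """
    accounts_by_type = defaultdict(lambda: defaultdict(set))
    for company_code, expense_type, gl_account in postings:
        accounts_by_type[expense_type][gl_account].add(company_code)
    # Keep only expense types that appear under two or more GL accounts.
    return {
        expense_type: {gl: sorted(codes) for gl, codes in accounts.items()}
        for expense_type, accounts in accounts_by_type.items()
        if len(accounts) > 1
    }

# Illustrative sample data (made up for this sketch).
sample_postings = [
    ("1000", "software subscriptions", "650100"),
    ("2000", "software subscriptions", "651200"),
    ("1000", "office rent", "640000"),
    ("2000", "office rent", "640000"),
]
inconsistent = find_inconsistent_gl_coding(sample_postings)
```

In this sample, only "software subscriptions" is reported, because the two company codes post it to different accounts while "office rent" is coded consistently.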

Missing or incorrect cost center assignments cause budget variances that controllers have to chase down manually at period-end. When a purchase order is created against the wrong cost center, which often happens because the requestor selected from a long dropdown without understanding the implications, the error propagates through goods receipt, invoice posting, and financial reporting.

Why SAP S/4HANA Migrations Don't Automatically Fix These Problems

One of the most persistent misconceptions in enterprise finance is that migrating to SAP S/4HANA will clean up historical data quality issues. The logic sounds plausible: a new platform means a fresh start, right? In practice, migration almost always makes legacy data quality issues more visible, and sometimes more severe.

There are several reasons why this happens.

Data migration is a lift-and-shift operation, not a cleansing operation. Most S/4HANA migrations use some form of extract, transform, load (ETL) process to bring legacy ERP data into the new system. If the legacy data contained 15,000 vendor records of which 3,000 were duplicates, the new system will inherit those 3,000 duplicates unless a deliberate data cleansing project is run in parallel, which adds scope, cost, and timeline to already-stressed implementation projects. In practice, cleansing is frequently deferred.

The Business Partner model in S/4HANA introduces new governance requirements. S/4HANA consolidates the vendor and customer masters into the Business Partner (BP) object. This is architecturally sound because it eliminates the historical separation between vendor and customer records for the same entity, but it requires organizations to re-establish governance processes for BP creation, change, and archiving. Organizations that migrate without establishing these processes find themselves creating new data quality issues in the new model.

The Universal Journal creates new sensitivity to master data errors. S/4HANA's Universal Journal (table ACDOCA) combines what were previously separate ledgers, such as FI, CO, and ML, into a single source of truth. This is powerful for reporting, but it means that a GL account assignment error, a cost object error, or a tax code error now propagates simultaneously across financial accounting, controlling, and profitability analysis. In the old architecture, these were separate tables that could be corrected independently. In S/4HANA, the error is unified and so is the correction effort.

Fiori app proliferation introduces new data entry points. The SAP Fiori interface dramatically lowers the barrier to creating and modifying master data records, which is often cited as a benefit. But more entry points with insufficient validation mean more ways for incorrect data to enter the system. Without strong master data governance workflows, such as mandatory approval steps, duplicate checks, and completeness validation, Fiori accelerates both good and bad data creation.

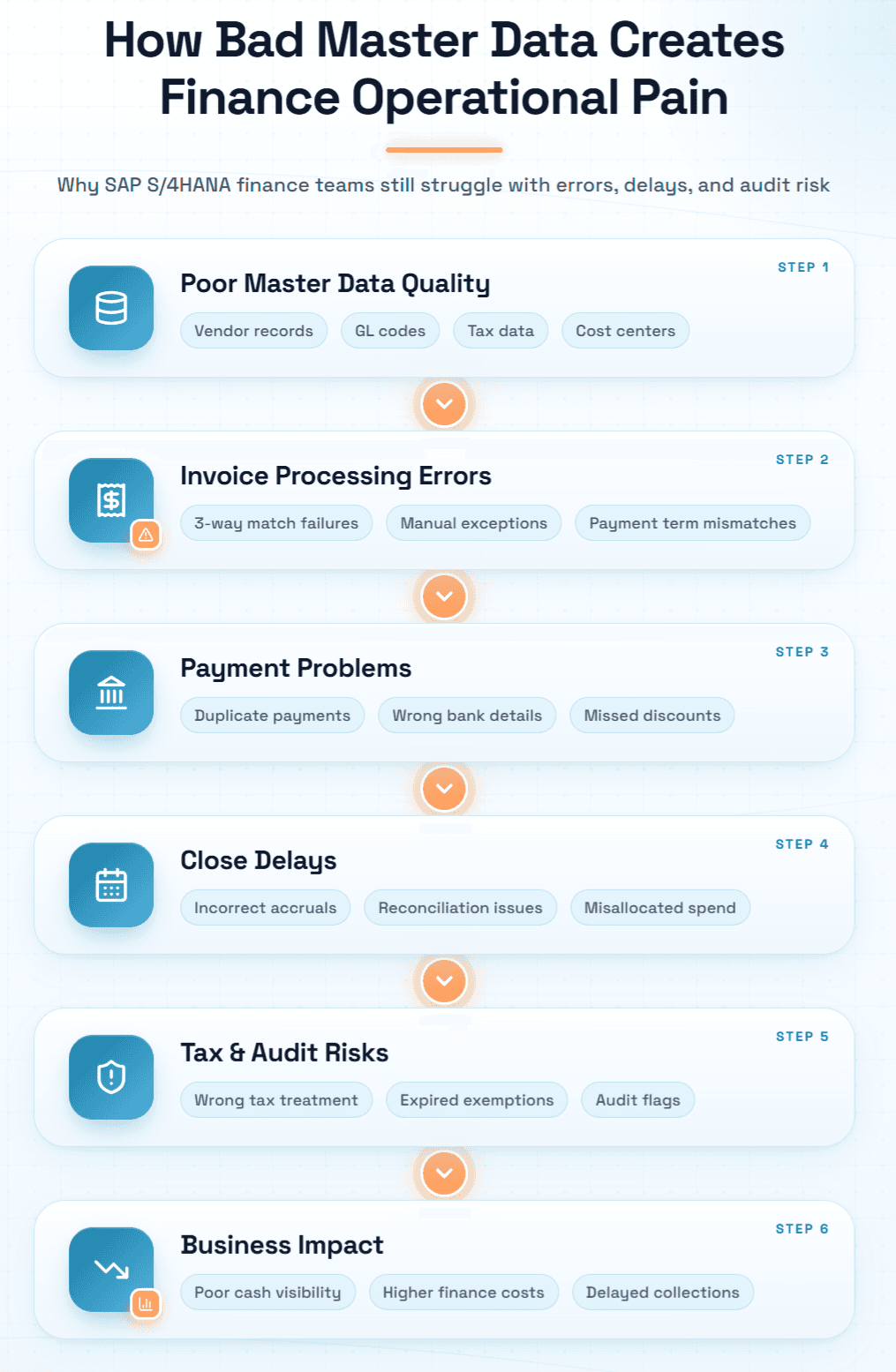

The Six Areas Where Finance Data Quality Issues Cause Real Operational Pain

1. Invoice Processing and Three-Way Matching

Invoice processing is where master data quality issues inflict the most immediate, measurable pain. The challenges that prevent straight-through invoice processing are numerous, but master data failures account for a significant share of them.

When the vendor master payment terms do not match the invoice, the system flags an exception. When the material master unit of measure does not match the invoice unit, three-way matching fails. When the purchase order was created with an incorrect price or quantity, which often happens because the vendor master catalog data was stale, the match tolerance is breached and a human must investigate. For organizations processing thousands of invoices monthly, even a 15% exception rate translates to hundreds of hours of manual resolution work every month.
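The failure modes described above can be illustrated with a simplified three-way match. This is a sketch under stated assumptions, not how SAP implements invoice verification: the `Line` structure, the 2% price tolerance, and the exception messages are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Line:
    quantity: float
    unit_price: float
    unit_of_measure: str

def three_way_match(po, grn_quantity, invoice, price_tolerance=0.02):
    """Compare PO, goods receipt, and invoice; return a list of exceptions.

    An empty list means the invoice matches. price_tolerance is a
    hypothetical relative tolerance (2% of the PO unit price).
    """
    exceptions = []
    # Master data check: units must agree before quantities are comparable.
    if po.unit_of_measure != invoice.unit_of_measure:
        exceptions.append("unit of measure mismatch")
    # Receipt check: the vendor cannot bill for more than was received.
    if invoice.quantity > grn_quantity:
        exceptions.append("invoiced quantity exceeds goods received")
    # Price check: invoice price must be within tolerance of the PO price.
    if abs(invoice.unit_price - po.unit_price) > price_tolerance * po.unit_price:
        exceptions.append("price outside tolerance")
    return exceptions

po_line = Line(quantity=100, unit_price=10.0, unit_of_measure="EA")
clean_invoice = Line(quantity=100, unit_price=10.1, unit_of_measure="EA")
bad_invoice = Line(quantity=120, unit_price=11.0, unit_of_measure="CS")
```

With these sample values, `clean_invoice` passes against a receipt of 100 units, while `bad_invoice` raises all three exceptions, each of which traces back to a different piece of reference data.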

The types of matching strategies for invoices, POs, and GRNs matter enormously here. Two-way, three-way, and four-way matching all depend on clean reference data. Without it, every matching strategy generates noise rather than assurance.

2. Vendor Payments

Payment errors driven by master data failures come in several forms. Payments to incorrect bank accounts are the most dangerous. Payments made on the wrong terms, whether too early (losing cash float) or too late (incurring penalties), are the most costly in aggregate. Payments made to duplicate vendors are embarrassing and require manual recovery.

The strategic opportunity cost is also significant. Organizations that have clean payment terms data and good cash visibility can systematically capture early payment discounts. Those operating with dirty data are flying blind: they cannot confidently identify which invoices qualify for a discount, so they either miss the discounts or process them case by case at the cost of significant manual effort.

3. Period-End Close

The month-end and quarter-end close process is where master data quality issues accumulate into acute operational pain. Controllers find GL account balances that cannot be explained because cost center assignments were inconsistent. Accruals cannot be confidently estimated because the list of services received but not invoiced is unreliable: if vendor records are incomplete, the system cannot correctly identify open commitments. Intercompany reconciliation fails because entity codes are applied inconsistently.

The resource Navigating the complexities of accruals in accounts payable captures exactly how these failures manifest at period-end.

4. Tax Compliance

Tax compliance is unforgiving of master data errors. In a US multi-state context, the correct tax treatment of a vendor invoice depends on the origin address, the destination address, the product/service category, and any applicable exemption certificates. All of this is reference data that must be accurately maintained in the system. When the vendor's address in the master record is a corporate headquarters rather than the actual ship-from location, tax calculations are wrong from the start.

5. Financial Reporting and Audit

Auditors and internal controls teams rely on the assumption that master data reflects reality. When it does not (when vendor names differ between the ERP and the actual legal entity, when cost centers no longer correspond to active organizational units, when GL accounts have been used inconsistently), the audit becomes a manual reconciliation exercise. The impact of GL coding on financial reporting and audits is substantial and often underestimated during planning.

6. Order-to-Cash Cycle

Data quality issues affect not just the procure-to-pay side but the entire order-to-cash process. Customer master errors, such as incorrect addresses, wrong payment terms, missing credit limits, and outdated contact information, cause billing disputes, failed cash applications, and delayed collections. When an invoice goes out with the wrong remittance address or the wrong bank details because the customer record was not updated after an acquisition, the resulting confusion can delay payment by weeks.

Root Causes: Why Master Data Governance Remains Weak

Understanding why master data quality issues persist despite the availability of governance tools requires an honest examination of organizational dynamics, not just technical gaps.

Governance is treated as an IT problem, not a finance problem. In most organizations, master data governance is nominally owned by IT or an MDM team, but the business processes that create and use master data are owned by finance, procurement, and operations. When governance breaks down, each side assumes the other is responsible for fixing it. Finance complains that the data is wrong; IT says it only loads what the business provides.

Data stewardship is not resourced. Effective master data governance requires dedicated data stewards who have both the authority to enforce data standards and the time to review and correct records. Most organizations do not have this role filled. Responsibility is diffused across AP teams, procurement teams, and shared services, none of whom see data quality management as their primary job.

Change management processes are inconsistently applied. Many organizations have formal processes for creating new master data records (vendor onboarding workflows, for example) but informal processes for changing them. A vendor calls the AP team with new bank details, and the AP team updates the record directly, without any verification or approval step. The vendor onboarding challenges and advantages of AI discussion is directly relevant here: the same rigor applied at onboarding must extend to change management throughout the vendor lifecycle.

Legacy migration debt is never fully resolved. As discussed above, data cleansing is frequently deferred during S/4HANA migration. Once the system goes live, the urgency to cleanse legacy data evaporates: the business is operational, and there is no compelling event to drive a cleanup project. The debt compounds over time as new transactions reference old, dirty records.

Redundant GL codes proliferate without governance. One of the most pervasive and least-discussed data quality issues in SAP environments is the growth of redundant and duplicate GL codes in the chart of accounts. Over time, as new business needs emerge, new GL accounts are created ad hoc, often without checking whether an appropriate account already exists. The result is a chart of accounts that is simultaneously too granular in some areas and too coarse in others, making consistent coding impossible.

The Hidden Cost Cascade: What Bad Master Data Actually Costs

The costs of poor master data governance are both direct and indirect, and the indirect costs are typically larger.

Direct costs include duplicate payments (industry estimates range from 0.1% to 0.5% of total invoice spend), late payment penalties, lost early payment discounts (typically 1–2% of eligible invoice value), and tax compliance penalties. For a company processing $500 million in annual payables, even a 0.1% duplicate payment rate represents $500,000 in recoverable overpayments, and recovery is rarely complete.
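The duplicate payment arithmetic can be checked across the full 0.1% to 0.5% range quoted above. This is a small worked sketch; the function name is hypothetical, and the figures are the ones stated in the text.

```python
from decimal import Decimal

def duplicate_payment_exposure(annual_payables, duplicate_rate):
    """Annual value of duplicate payments at a given share of invoice spend."""
    return annual_payables * duplicate_rate

annual_payables = Decimal("500000000")  # $500 million, from the text
low = duplicate_payment_exposure(annual_payables, Decimal("0.001"))   # 0.1%
high = duplicate_payment_exposure(annual_payables, Decimal("0.005"))  # 0.5%
print(f"Duplicate payment exposure: ${low:,.0f} to ${high:,.0f}")
```

At the top of the quoted range, the same $500 million in payables implies $2.5 million in duplicate payments per year, five times the low-end figure.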

Indirect costs are harder to quantify but more damaging. When AP teams spend 30–40% of their time resolving exceptions caused by master data mismatches, the opportunity cost is enormous: that time could be spent on strategic cash management, supplier relationship development, or analytics. When finance controllers spend the last week of every month chasing down GL coding errors and cost center misassignments, the close cycle extends, and the quality of management reporting suffers. When auditors find control weaknesses related to vendor master maintenance, remediation programs consume hundreds of person-hours.

The ROI on AI-led automation in finance framework captures many of these cost dimensions in a structured way, and the analysis consistently shows that master data quality improvement is among the highest-leverage investments available to finance organizations.

What Good Master Data Governance Actually Looks Like

It is worth spending time on what effective master data governance looks like in practice, because it is frequently misunderstood as a purely technical exercise when it is fundamentally a process and organizational discipline.

A single ownership model. Every master data object, such as vendor, customer, material, or GL account, should have a named owner who is accountable for its accuracy. This is not a committee; it is an individual with the authority to approve changes and the responsibility to manage the quality of the records under their stewardship.

Automated completeness and consistency checks at the point of creation. Rather than relying on training to ensure data quality at creation, the system should enforce completeness. Mandatory fields, validation rules, duplicate checking, and cross-field consistency checks should be embedded in the creation workflow. The best practices for approvals of purchase requisitions and purchase orders framework is instructive: approval workflows can embed data quality checks at every step.
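The checks named above (mandatory fields, duplicate checking, cross-field consistency) can be sketched as a single validation function run before a vendor record is saved. This is illustrative only: the field names, the use of a tax ID as the duplicate key, and the US-state rule are assumptions made for the example, not SAP Business Partner fields or rules.

```python
# Hypothetical mandatory fields for a vendor record.
REQUIRED_FIELDS = ("legal_name", "country", "payment_terms", "bank_account", "tax_id")

def is_blank(value):
    """Treat None, empty strings, and whitespace-only strings as missing."""
    return value is None or not str(value).strip()

def validate_vendor(record, existing_tax_ids):
    """Return a list of validation errors; an empty list means the record may be created."""
    # Completeness: every mandatory field must be filled.
    errors = [
        f"missing required field: {field}"
        for field in REQUIRED_FIELDS
        if is_blank(record.get(field))
    ]
    # Duplicate check: assumes the tax ID identifies a legal entity uniquely.
    tax_id = record.get("tax_id")
    if not is_blank(tax_id) and str(tax_id).strip() in existing_tax_ids:
        errors.append("possible duplicate: tax_id already on file")
    # Cross-field consistency: an illustrative rule, not a universal requirement.
    if record.get("country") == "US" and is_blank(record.get("state")):
        errors.append("cross-field: US vendor requires a state")
    return errors
```

The point of returning a list rather than failing on the first problem is that the requestor sees every issue at once, which keeps the creation workflow to a single correction round.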

A controlled change management process. Every change to a master data record, particularly for fields that affect financial transactions, such as bank details, tax codes, and payment terms, should require documented justification, multi-level approval, and an audit trail. This is the single most important control for preventing payment fraud and tax compliance errors.

Periodic review and archiving. Inactive master data records (vendors with whom no purchase has been made in two years, customers who have not placed an order in 18 months, cost centers that correspond to dissolved organizational units) should be reviewed regularly and either updated or flagged for archiving. The discipline of maintaining, reviewing, and auditing the Chart of Accounts is a model for how this review cadence should work.
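The inactivity thresholds above translate directly into a periodic review job. The sketch below assumes a hypothetical list of (record ID, record type, last activity date) tuples and approximates two years as 730 days and 18 months as 548 days.

```python
from datetime import date, timedelta

# Inactivity limits taken from the text, approximated in days.
INACTIVITY_LIMITS = {
    "vendor": timedelta(days=730),    # roughly two years
    "customer": timedelta(days=548),  # roughly 18 months
}

def flag_for_review(records, today):
    """Return IDs of records whose last activity is older than the limit for their type.

    records: iterable of (record_id, record_type, last_activity_date) tuples,
    a hypothetical extract format chosen for illustration.
    """
    flagged = []
    for record_id, record_type, last_activity in records:
        limit = INACTIVITY_LIMITS.get(record_type)
        if limit is not None and today - last_activity > limit:
            flagged.append(record_id)
    return flagged
```

Flagged records would go to the data owner for a decision (update or archive) rather than being archived automatically, in line with the ownership model described above.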

Integration between master data and transactional processes. Governance cannot be a side process that runs independently of operations. When an invoice arrives with vendor details that do not match the master record, the discrepancy should be surfaced immediately, not after the invoice has been posted. When a payment is about to be made to a bank account that was changed within the last 30 days, a heightened verification step should be triggered automatically. Governance and operations must be integrated, not separate.
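The two triggers in this paragraph, a mismatch against the master record and a bank account changed within the last 30 days, can be expressed as a pre-payment check. This is a minimal sketch; the function name, the return values, and the placeholder bank identifiers are hypothetical.

```python
from datetime import date, timedelta

RECENT_CHANGE_WINDOW = timedelta(days=30)  # the 30-day window from the text

def payment_release_decision(payment_date, bank_changed_on, invoice_bank, master_bank):
    """Return "hold", "verify", or "release" for a proposed payment.

    bank_changed_on is the date the master record's bank details were last
    changed, or None if they have never changed.
    """
    # Bank details on the invoice must match the master record.
    if invoice_bank != master_bank:
        return "hold"
    # A recent bank detail change triggers heightened verification.
    if bank_changed_on is not None and payment_date - bank_changed_on <= RECENT_CHANGE_WINDOW:
        return "verify"
    return "release"
```

For example, a payment dated 30 June against bank details changed on 10 June returns "verify", while the same payment against details last changed in January returns "release".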

The Role of AI in Solving Systemic Master Data Problems

The governance frameworks described above are sound in theory, but in practice they are difficult to sustain at scale. Manual review processes are slow. Data stewards are expensive. Rule-based validation catches known error patterns but misses novel ones. And the volume of master data changes in a large SAP S/4HANA environment, hundreds or thousands per month, makes manual oversight genuinely impractical.

This is where AI designed specifically for finance operations changes the calculus.

AI systems can analyze historical transaction data to identify patterns that suggest master data errors (vendors with inconsistently applied payment terms, GL accounts used for inconsistent expense types, tax codes applied to categories they do not match) and surface these as data quality issues for human review, rather than waiting for an auditor to find them. AI can perform real-time duplicate detection using fuzzy matching, for example identifying that "ACME Corp." and "Acme Corporation" in two different vendor records are likely the same entity, even when the exact name strings differ. AI can learn from the corrections that data stewards make and apply those learnings to future validation, progressively reducing the manual review burden.
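The "ACME Corp." versus "Acme Corporation" case can be reproduced with simple string normalization and the similarity ratio from Python's standard `difflib` module. This is a rule-based stand-in for illustration, not the matching logic of any particular AI product; the suffix list and the 0.9 threshold are assumptions.

```python
import re
from difflib import SequenceMatcher

# Hypothetical list of legal-form suffixes to ignore when comparing names.
LEGAL_SUFFIXES = {"corp", "corporation", "inc", "incorporated", "ltd",
                  "limited", "llc", "co", "company", "gmbh"}

def normalize(name):
    """Lowercase, strip punctuation, and drop legal-form suffixes."""
    tokens = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    return " ".join(token for token in tokens if token not in LEGAL_SUFFIXES)

def similarity(first, second):
    """Similarity ratio between 0 and 1 on the normalized names."""
    return SequenceMatcher(None, normalize(first), normalize(second)).ratio()

def likely_duplicates(names, threshold=0.9):
    """Return pairs of names whose normalized similarity meets the threshold."""
    pairs = []
    for index, first in enumerate(names):
        for second in names[index + 1:]:
            if similarity(first, second) >= threshold:
                pairs.append((first, second))
    return pairs

vendors = ["ACME Corp.", "Acme Corporation", "Apex Industrial Supply"]
duplicates = likely_duplicates(vendors)
```

Here the two ACME records normalize to the same string and are paired, while the third vendor is not. The pairwise loop is quadratic, so a production approach would typically block candidates first (for example by country or tax ID) before comparing names.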

More broadly, AI can serve as the connective tissue between master data governance and transactional finance operations, ensuring that the data quality principles that governance defines are actually enforced at the moment every transaction is processed, not discovered after the fact. Understanding how AI complements ERP systems rather than replacing them is essential context for any finance leader considering this path.

The agentic AI revolution in finance is not simply about automating existing workflows. It is about creating systems that actively monitor data quality, surface anomalies, enforce governance rules in real time, and continuously improve their accuracy through feedback, something that human-only governance teams cannot achieve at the scale modern finance operations require.

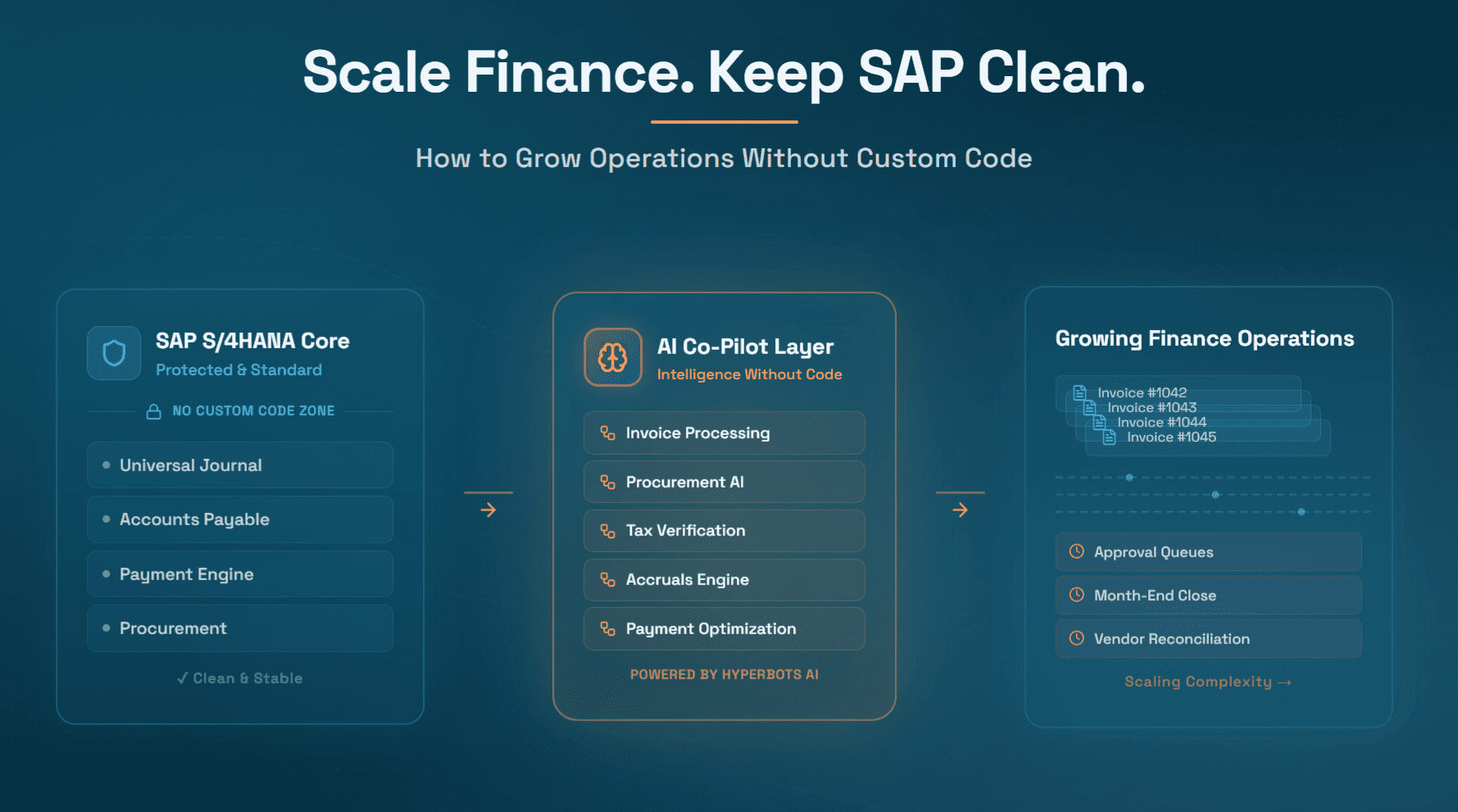

Hyperbots: How AI Co-Pilots Address Master Data-Driven Finance Failures

This brings us to the practical question that every finance leader eventually arrives at: given the scale and persistence of these problems, what does a solution actually look like?

Hyperbots has built the most comprehensive suite of AI co-pilots purpose-designed for finance and accounting operations. These are not generic automation tools or robotic process automation wrappers applied to SAP workflows. They are AI-native, finance-specific agents that understand the structure of financial data, the logic of financial processes, and the governance requirements of finance operations. The result is a platform that reduces operational costs by up to 80% while dramatically improving the accuracy and compliance of every process it touches.

Critically, Hyperbots co-pilots do not require clean master data before they can deliver value. They are designed to operate in the real-world conditions of enterprise SAP environments where master data is imperfect, processes are inconsistent, and exceptions are the norm rather than the exception. They surface data quality issues as they process transactions, making master data governance an active, ongoing function rather than a periodic project.

Invoice Processing Co-Pilot: Eliminating Matching Failures at Scale

The Invoice Processing Co-Pilot addresses what is, for most SAP S/4HANA users, the single largest operational cost center in finance: invoice processing and exception management.

The co-pilot achieves 99.8% extraction accuracy across structured and unstructured invoice formats without template dependency. This matters enormously in practice because vendor invoices are never truly standardized. The same supplier may send PDF invoices one month and EDI transactions the next. New suppliers have formats the system has never seen before. The co-pilot handles all of these without manual setup.

But the deeper value lies in what happens when master data and invoice data diverge. Rather than simply flagging a mismatch and routing to a human, the co-pilot intelligently identifies the source of the discrepancy (Is the vendor master payment term outdated? Is the unit of measure on the material master inconsistent with how the vendor invoices?) and surfaces the specific master data record that needs correction. Over time, this converts exception management from a reactive cost center into a proactive data quality improvement engine.

The co-pilot achieves 80%+ straight-through processing rates, meaning at least 80% of invoices are processed from receipt to GL posting with zero human touch. For an organization processing 10,000 invoices monthly, that means at least 8,000 invoices handled automatically, freeing the AP team to focus on the remaining 2,000 or fewer that genuinely require judgment.

Vendor Management Co-Pilot: Governance Built Into the Workflow

The Vendor Management Co-Pilot addresses the governance gap at the heart of most vendor master data problems: the lack of controlled, auditable processes for vendor creation, change, and verification.

The co-pilot enforces structured onboarding workflows (document collection, identity verification, bank account validation, tax classification confirmation) that ensure every new vendor record in SAP S/4HANA is complete, accurate, and compliant before the first transaction is processed. It extends this rigor to change management: when a vendor submits a bank account change request, the co-pilot routes it through an authenticated verification workflow before any update is made to the master record.

The benefit finance teams experience most immediately is fraud prevention. BEC fraud via fake vendor bank account change requests has cost enterprises billions of dollars globally. The Vendor Management Co-Pilot's controlled change workflow, with multi-factor verification and approval routing, eliminates the attack vector that makes this fraud possible.

Beyond security, the co-pilot maintains vendor relationship quality over time. It monitors vendor communication, tracks discrepancies between invoiced and agreed terms, and surfaces vendors whose data is becoming stale, driving the periodic review discipline that most organizations struggle to maintain manually.

Procurement Co-Pilot: Clean Data from the Point of Origination

The Procurement Co-Pilot addresses a principle that every data quality practitioner understands: it is far cheaper to prevent a master data error at the point of origination than to correct it downstream. For procure-to-pay operations, the point of origination is the purchase requisition.

The co-pilot automates PR creation with intelligent field population, drawing on vendor master data, material master data, cost center assignments, and GL account mappings to pre-fill fields correctly. Budget validation checks prevent commitments against exhausted or wrong cost centers before the PO is ever created. Policy checks flag requisitions that violate approval thresholds or preferred vendor lists.

The practical effect is that purchase orders entering SAP S/4HANA through the Procurement Co-Pilot carry clean, validated master data references from the start. This dramatically reduces the rate of three-way matching exceptions downstream because the PO was built on good data to begin with. The PR-to-PO cycle compression from three days to four hours is one measurable outcome; the reduction in downstream exceptions is an equally valuable but less obvious one.

Sales Tax Verification Co-Pilot: Automating the Most Complex Reference Data Problem in Finance

Sales and use tax compliance is arguably the area where master data quality issues create the most concentrated regulatory risk. The Sales Tax Verification Co-Pilot addresses this directly by automating the validation of tax treatment on every vendor invoice, checking origin and destination jurisdiction, product/service category classification, applicable rates, and exemption certificate status in real time.

The practical value is illustrated by the documented case of a CFO who eliminated $200,000 in annual tax leakage using this co-pilot. The leakage was not the result of intentional noncompliance; it was the result of stale tax classifications in the vendor and material masters, combined with manual review processes that could not keep pace with invoice volume. The co-pilot found and flagged every misclassified invoice, enabling recovery of overpaid tax and prevention of future leakage.

Payments Co-Pilot: Intelligent Cash Management on Top of Clean Execution

The Payments Co-Pilot transforms payment execution from a transactional process into a strategic one. It does not simply execute payments based on due dates from the master data; it analyzes cash position, vendor relationship context, early payment discount opportunities, and payment terms to recommend optimal payment timing for each invoice.

The financial impact of this capability is substantial. Organizations that capture even 50% of available early payment discounts at a 2/10 Net 30 structure on $100 million of eligible payables recover $1 million annually. The co-pilot makes this systematic rather than opportunistic: it identifies every discount opportunity, validates the vendor master data supporting it, and routes the recommendation through the appropriate approval workflow.
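The discount arithmetic above can be worked through in a few lines. Under 2/10 Net 30, a 2% discount applies when the invoice is paid within 10 days of the invoice date. The function names below are hypothetical, and the sketch ignores details such as baseline dates and bank processing time.

```python
from datetime import date
from decimal import Decimal

DISCOUNT_RATE = Decimal("0.02")  # the "2" in 2/10 Net 30
DISCOUNT_DAYS = 10               # the "10" in 2/10 Net 30

def discount_available(invoice_date, payment_date, amount):
    """Discount earned if the invoice is paid on payment_date, otherwise zero."""
    days_elapsed = (payment_date - invoice_date).days
    if 0 <= days_elapsed <= DISCOUNT_DAYS:
        return amount * DISCOUNT_RATE
    return Decimal("0")

def annual_captured_discount(eligible_payables, capture_share):
    """Annual discount value captured on a given share of eligible payables."""
    return eligible_payables * DISCOUNT_RATE * capture_share

# The scenario from the text: $100 million eligible, 50% captured.
captured = annual_captured_discount(Decimal("100000000"), Decimal("0.5"))
print(f"Captured discount: ${captured:,.0f}")
```

The result matches the $1 million figure: 2% of $100 million is $2 million of available discount, and half of that is captured.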

Fraud prevention is equally important. The co-pilot's anomaly detection identifies payment requests that deviate from established patterns (unusual beneficiaries, amounts inconsistent with historical invoicing, payment instructions that differ from master data) and holds them for review before funds are released.

Accruals Co-Pilot: Period-End Accuracy Without Manual Effort

The Accruals Co-Pilot directly addresses one of the most painful consequences of master data quality issues: unreliable period-end accruals. It automatically discovers unbilled liabilities, such as goods received but not invoiced, services delivered but not billed, and recurring expenses with no active PO, by analyzing open purchase orders, delivery confirmations, and contract data in SAP S/4HANA.

The benefit is a faster, more accurate close. Finance controllers who previously spent the last three days of every month manually identifying and estimating accruals, with constant uncertainty about what they were missing, now have a co-pilot that surfaces complete accrual candidates automatically, books the journal entries with AI-suggested GL coding and cost object assignments, and reverses them automatically in the following period. Month-end close cycles that previously ran 7–10 days compress to 3–5 days, and the quality of accruals improves measurably.
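The simplest of these accrual candidates, goods received but not invoiced, reduces to comparing received and invoiced quantities on each open PO line. The sketch below shows only that comparison; the dictionary keys and PO identifiers are hypothetical, and it is not a description of how the Accruals Co-Pilot works internally.

```python
def accrual_candidates(po_lines):
    """Return (po_number, accrual_amount) for lines received but not fully invoiced.

    po_lines: iterable of dicts with the hypothetical keys po_number,
    unit_price, received_qty, and invoiced_qty.
    """
    candidates = []
    for line in po_lines:
        open_qty = line["received_qty"] - line["invoiced_qty"]
        # Only quantities received in excess of what was invoiced are accrued.
        if open_qty > 0:
            candidates.append((line["po_number"], open_qty * line["unit_price"]))
    return candidates

# Illustrative sample data (made up for this sketch).
open_po_lines = [
    {"po_number": "PO-A", "unit_price": 25, "received_qty": 100, "invoiced_qty": 60},
    {"po_number": "PO-B", "unit_price": 40, "received_qty": 10, "invoiced_qty": 10},
]
candidates = accrual_candidates(open_po_lines)
```

In this sample only PO-A produces a candidate: 40 units received but not yet invoiced at 25 each. The calculation is only as reliable as the PO prices and receipt quantities it reads, which is why the master data issues described earlier surface so sharply at period-end.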

Collections Co-Pilot and Cash Application Co-Pilot: Completing the Finance Data Loop

On the order-to-cash side, the Collections Co-Pilot and Cash Application Co-Pilot address the mirror-image of the P2P data quality challenge: customer master data failures that prevent timely cash collection and accurate payment application.

The Cash Application Co-Pilot automatically matches incoming customer payments to open invoices, handling remittance advice in any format, including unstructured emails and portals, with AI-driven matching logic that tolerates partial payments, short payments, and multi-invoice remittances. The result is a dramatic reduction in unapplied cash, one of the most common sources of balance sheet inaccuracy and dispute management overhead.

The Collections Co-Pilot uses customer master data (payment history, credit terms, relationship tier, dispute history) to prioritize collection activity intelligently, draft customer-appropriate communication, and escalate overdue accounts according to configurable policy. Finance teams that previously managed collections manually against aging reports see DSO reductions of 15–25% with consistent co-pilot deployment.

Hyperbots Platform Capabilities: Transformational Impact at Scale

The individual co-pilots described above are powerful on their own. But the transformational impact of Hyperbots comes from its platform architecture, which enables these co-pilots to work together as a coordinated system rather than as isolated point solutions.

The Hyperbots platform is built on several capabilities that collectively change what is possible for finance operations:

AI-native architecture: Every co-pilot is built on AI-native foundations, not rules-based automation with an AI layer on top. This means the co-pilots reason about finance data the way a trained finance professional would, identifying anomalies, making judgment calls under uncertainty, and improving their accuracy over time through self-learning feedback loops. The self-learning capability means accuracy improves continuously as the system processes more transactions, without manual retraining.

Pre-trained, ready-to-deploy models: Hyperbots' co-pilots are pre-trained on finance-specific data, enabling organizations to go live in days rather than months. Finance teams that go live with pre-trained AI co-pilots report dramatically faster time-to-value than with traditional automation implementations.

Company-specific and industry-specific configuration: The platform supports company-specific policy configuration, ensuring that every approval workflow, matching tolerance, tax rule, and payment threshold reflects the organization's actual policies rather than generic defaults. This is combined with industry-specific AI configurations that understand the particular data patterns and process requirements of different sectors.

Unlimited user access: Hyperbots operates on an unlimited-user licensing model, enabling organization-wide deployment without the per-seat cost structures that typically limit adoption. Finance teams, procurement teams, operations teams, and shared services organizations can all access the co-pilots without license management overhead.

24/7 continuous operations: The co-pilots operate 24 hours a day, 7 days a week, processing invoices, managing vendor communications, monitoring payment queues, and surfacing accrual candidates without downtime. This is particularly valuable for organizations with global operations across multiple time zones.

Human-in-the-loop oversight: Rather than fully autonomous black-box processing, Hyperbots' human-in-the-loop model ensures that human judgment is applied at the right moments (complex exceptions, high-value transactions, and policy edge cases), while routine processing runs without manual intervention. This balances efficiency with the governance requirements of financial operations.
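The routing rule described here can be sketched in a few lines. This is an illustrative assumption about how such a policy might look, not the platform's implementation. `Transaction`, `route`, and the `review_threshold` default are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    amount: float
    has_exception: bool = False
    policy_edge_case: bool = False


def route(txn: Transaction, review_threshold: float = 10_000.0):
    """Return (queue, reasons); any reason sends the item to a person."""
    reasons = []
    if txn.has_exception:
        reasons.append("complex exception")
    if txn.amount >= review_threshold:  # hypothetical high-value cutoff
        reasons.append("high-value transaction")
    if txn.policy_edge_case:
        reasons.append("policy edge case")
    queue = "human_review" if reasons else "auto_process"
    return queue, reasons
```

Returning the reasons alongside the queue keeps a record of why a transaction was escalated, which is the kind of detail an audit trail needs.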

Deep ERP integration: Hyperbots integrates directly with SAP S/4HANA, SAP Business One, Oracle NetSuite, Microsoft Dynamics, QuickBooks, Sage, and many other platforms. The integration architecture enables bidirectional data flow: the co-pilots read master data from the ERP and write validated, enriched transaction data back, creating a continuous improvement loop rather than a one-way automation layer.

Hyperbots ROI: Tangible and Intangible Improvements in P2P and O2C

The ROI delivered by Hyperbots across procure-to-pay and order-to-cash is measurable in both hard financial metrics and operational quality improvements.

Tangible P2P ROI:

80% reduction in operational processing costs across AP and procurement workflows

99.8% invoice extraction accuracy, eliminating rework costs from data entry errors

80%+ straight-through processing rates, reducing per-invoice processing cost by 60–70%

Systematic early payment discount capture, with organizations consistently recovering 1–2% of eligible invoice spend

Near-elimination of duplicate payments, typically recovering 0.1–0.5% of total payables spend

Fraud loss prevention through controlled vendor bank account change workflows and anomaly detection in payment processing

Extreme Reach achieved 80% straight-through processing at 99.8% accuracy with zero manual touch-ups, a documented customer outcome

Tax leakage recovery: documented cases of $200,000+ annual recoveries through AI-driven sales tax verification

Tangible O2C ROI:

DSO reduction of 15–25% through intelligent, data-driven collections prioritization

Unapplied cash reduction of 80% through automated cash application matching

Dispute resolution cycle time reduction of 40–50% through better customer master data quality

Intangible benefits:

Finance team capacity redirected from transaction processing to strategic analysis

Audit readiness significantly improved through comprehensive, automated audit trails on every transaction and master data change

Month-end close cycle compression of 30–40%, reducing stress and improving reporting timeliness

Vendor relationship quality improvement through reliable, transparent payment communication

Risk posture improvement through systematic anomaly detection and governance enforcement

Finance leaders can quantify their own expected ROI using Hyperbots' suite of ROI calculators, which model specific savings scenarios for invoice processing, procurement, payments, collections, cash application, accruals, and vendor management.
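For a back-of-the-envelope view of two of the percentages quoted above, the sketch below applies the 1–2% early payment discount range and the 0.1–0.5% duplicate payment range to spend figures you supply. It is not one of Hyperbots' ROI calculators. The function name and both input parameters are assumptions for illustration.

```python
from decimal import Decimal

# Ranges quoted in the Tangible P2P ROI list above.
DISCOUNT_RANGE = (Decimal("0.01"), Decimal("0.02"))     # of eligible invoice spend
DUPLICATE_RANGE = (Decimal("0.001"), Decimal("0.005"))  # of total payables spend


def estimate_p2p_recovery(total_payables_spend, discount_eligible_spend):
    """Return (low, high) estimates for each of the two savings categories."""
    return {
        "early_payment_discounts": tuple(r * discount_eligible_spend for r in DISCOUNT_RANGE),
        "duplicate_payments": tuple(r * total_payables_spend for r in DUPLICATE_RANGE),
    }
```

For example, a hypothetical organization with 50,000,000 in total payables spend, of which 10,000,000 is discount-eligible, would see an estimated 100,000 to 200,000 from discount capture and 50,000 to 250,000 from avoided duplicate payments under these ranges.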

Industry-Specific Applications of Hyperbots Co-Pilots

Master data quality issues manifest differently across industries, and Hyperbots' industry-specific configurations reflect this reality.

Manufacturing: Manufacturing environments face particular challenges with material master data, complex BOM structures, multi-site procurement, and PO-heavy invoice volumes where three-way matching accuracy is critical. Hyperbots' manufacturing industry capabilities are configured for high-volume, PO-matched invoice processing with tight goods receipt integration, and for the specific accruals complexity of manufacturing operations with large volumes of goods-received-but-not-invoiced items.

Professional Services: Services firms deal with a different data quality challenge: time-and-material invoices that do not map neatly to POs, complex project cost structures, and high dependency on accurate GL coding for project profitability reporting. Hyperbots' configurations for professional services support matching strategies for open-ended service contracts and intelligent GL coding based on project context.

Retail: Retail finance operations involve high transaction volumes, complex vendor terms, and the particular challenge of managing seasonal payment cycles and promotional discount structures. Hyperbots' retail industry positioning reflects this, with configurations optimized for high-volume, multi-vendor, multi-location operations.

Healthcare: Healthcare procurement faces the specific compliance burden of medical supply chain requirements such as vendor credentialing and product classification, along with the particular sensitivity of any payment error in a regulated environment. The healthcare procurement automation applications of Hyperbots' co-pilots address these requirements directly.

The platform's flexibility across these industry contexts reflects a core design principle: policy-driven AI that drives productivity gains because it understands and enforces the specific rules that matter for each organization, in each industry, rather than applying generic automation logic.

Frequently Asked Questions

Q1: Can Hyperbots co-pilots work with SAP S/4HANA out of the box, or is extensive configuration required?

Hyperbots co-pilots are designed with pre-built ERP connectors and pre-trained AI models that enable rapid deployment against SAP S/4HANA environments. Most organizations are live within weeks, not months, without requiring custom development. Faster onboarding with ERP integration is a documented capability of the platform.

Q2: Do the co-pilots require clean master data to start delivering value?

No. This is a common misconception. Hyperbots co-pilots are designed to operate in real-world conditions where master data is imperfect. They surface data quality issues as they process transactions, enabling iterative improvement rather than requiring a prior cleansing project. The co-pilots help finance teams discover and fix master data problems through normal operations.

Q3: How does Hyperbots handle the human approval requirements that finance governance demands?

The platform's human-in-the-loop architecture ensures that human judgment is required for exceptions, high-value transactions, and policy edge cases, with full audit trails documenting every decision. Routine, validated transactions process automatically. This balance is configurable to match each organization's risk tolerance and governance requirements.

Q4: What measurable impact do organizations see on master data quality after deploying Hyperbots?

Organizations typically see measurable improvement in vendor master data completeness (through structured onboarding and change management workflows), reduction in GL coding errors (through AI-assisted coding with policy validation), and reduction in tax code misapplication (through automated sales tax verification). Over time, the exception rate on invoice processing declines as the co-pilots surface and drive resolution of systemic master data issues.

Q5: Is Hyperbots suitable for mid-market organizations or only large enterprises?

The unlimited-user licensing model and pre-trained deployment approach make Hyperbots accessible and ROI-positive for mid-market organizations as well as large enterprises. The platform scales from organizations processing a few thousand invoices per month to those processing hundreds of thousands. The platform's multi-agent collaboration architecture for finance and accounting ensures that co-pilots scale with organizational complexity.

Q6: How does Hyperbots position relative to other AI finance automation platforms?

Hyperbots is designed specifically for finance and accounting operations, with AI models trained on finance-specific data and process logic. Unlike generic automation platforms or spend management tools that extend into finance, Hyperbots covers the full P2P and O2C lifecycle with purpose-built co-pilots for each sub-process. The AI-native architecture, reasoning-based rather than rule-based, delivers higher accuracy, better exception handling, and continuous self-improvement that rule-based tools cannot match.

Q7: Can finance teams calculate their expected ROI before committing to a full deployment?

Yes. Hyperbots provides a comprehensive suite of ROI calculators (for invoice processing, procurement, payments, vendor management, collections, cash application, accruals, and more), available at hyperbots.com/roi-calculators. Organizations can model their specific expected savings before deployment.

Master Data Governance Is a Finance Priority, Not an IT Project

The evidence is clear: master data quality issues in SAP S/4HANA are not technical problems waiting for an IT fix. They are finance operations problems that manifest every day in invoice exceptions, payment errors, period-end delays, compliance risks, and audit findings. They persist because governance has been treated as a separate discipline from operations, a project to be done once rather than a process to be embedded everywhere.

The most effective response to this challenge combines three elements: clear ownership and governance frameworks for master data, ERP configurations that enforce data quality at the point of creation and change, and AI co-pilots that bring governance discipline to every transaction at operational scale.

Hyperbots delivers that third element, and in doing so it makes the first two more achievable, because the feedback loop from co-pilot processing surfaces the specific data quality issues that governance must address. Finance teams that deploy Hyperbots do not just automate their operations; they progressively improve the quality of the finance data foundation that every financial decision depends on.

For finance leaders ready to close the gap between what SAP S/4HANA promises and what it actually delivers, the starting point is not another data cleansing project. It is deploying AI co-pilots that make master data governance a continuous, operational reality. Request a personalized demo to see how Hyperbots co-pilots perform in your SAP environment, or explore the free trial to begin measuring the impact firsthand.